“Meta spent 70 billion dollars on the metaverse and achieved absolutely nothing.”

That’s an easy joke to make. But it’s also not really true.

That number includes years of R&D across multiple VR headsets, several generations of smart glasses, AI systems, and experimental AR hardware. And eventually, all of that led to this: Meta Ray-Ban Display – smart glasses with an actual built-in display that normal people can buy and wear outside without looking completely ridiculous.

Well… mostly.

I spent two weeks using these glasses as my primary wearable gadget. I wore them everywhere, tested every feature I could, accidentally got addicted to Instagram Reels, and tried to answer one simple question:

Are display-equipped smart glasses finally useful for regular people?

The answer is complicated.

From Project Orion to Consumer Glasses

About a year ago, Meta privately demonstrated Project Orion to journalists and creators – true augmented reality glasses with dual displays and an external compute puck carried in your pocket.

Unlike current smart glasses, Orion wasn’t just about notifications or cameras. The goal was much bigger: replacing your smartphone entirely while layering digital interfaces directly onto the real world.

The demos looked incredibly cyberpunk.

But realistically, Orion was never meant to become a mass-market product anytime soon. It was mainly there to prepare investors and consumers for devices like the Meta Ray-Ban Display – the first public-facing Meta glasses with an actual display system designed for everyday use.

And honestly, this strategy seems to be working.

While Meta hasn’t revealed exact sales numbers for the display model, Meta and Ray-Ban reportedly sold around 7 million pairs of smart glasses overall last year. Most were the cheaper display-less versions (I own a Gen 2 Wayfarer myself), but the trend is obvious: people are slowly warming up again to the idea of wearing computers on their faces.

Yes, we’ve basically come full circle back to Google Glass.

Design and Hardware

The first thing you notice about the glasses is the frame.

It’s thick. Very thick.

Compared to earlier Ray-Ban Meta models, the display version looks noticeably bulkier, especially around the right lens where the waveguide system and display hardware are located.

The weight increase is also immediately noticeable. Meta even added rubber nose pads to compensate for the extra pressure on your face during long sessions.

The classic Ray-Ban branding is also partially replaced by prominent Meta logos, making the glasses feel slightly less stylish and slightly more like prototype developer hardware.

That said, they’re still far more socially acceptable than most AR headsets currently on the market.

Cameras Became Much Better

Like the cheaper non-display models, the glasses can instantly capture photos or record up to three minutes of video using the built-in camera.

But the addition of a display changes the experience dramatically.

For the first time, you can actually see what the camera is pointed at while recording. That sounds minor until you’ve experienced using camera glasses without any framing reference whatsoever.

With previous Meta smart glasses, I constantly recorded moments only to later discover I was aiming slightly too far left or right because the camera perspective doesn’t perfectly match human eyesight.

Now you can correct for that in real time.

The glasses also introduce 3x zoom support during recording, controlled using Meta’s new gesture bracelet.

And surprisingly, the bracelet might be the most impressive piece of technology here.

The Gesture Bracelet Is Weirdly Excellent

At first glance, the included bracelet looks like an old fitness tracker from 2014.

No display. One button. Magnetic charging dock.

But once connected to the glasses, it becomes one of the most intuitive input systems I’ve used in wearable tech.

Navigation works through subtle finger gestures:

- Swipe your thumb for directional movement

- Tap your thumb and index finger to confirm

- Use the middle-finger gesture to go back

- Rotate your wrist for sliders like brightness or volume

There’s even experimental finger-writing support for text input, though it’s still not fully released.

The impressive part is how quickly your brain adapts. After a few minutes, the gestures become almost subconscious. You stop making exaggerated movements and start controlling the interface with tiny finger motions that nobody around you even notices.

Your hand can rest on a table, stay in your pocket, or even sit behind your back – the tracking still works.

Ironically, one place where gestures don’t work properly is while driving. Which is honestly a good thing because these glasses are distracting enough already.

And yes: please do not use smart glasses while driving.

Using Them in Public Feels Extremely Strange

One of my first experiences with the glasses happened while waiting for my wife at a restaurant.

I opened Instagram and started scrolling Reels because that’s apparently what modern humans do during any free 30-second interval, at least according to Meta.

Later, my wife told me she immediately spotted me from across the room because I looked completely insane.

Everyone else was looking down at their phones. Meanwhile, I was sitting perfectly upright, staring motionlessly at a wall with a completely expressionless face while silently consuming social media content floating inside my right eye.

The future apparently makes us look like psychopaths.

Still, I also made WhatsApp calls through the built-in interface, and interestingly nobody around me reacted at all. So socially, we may already be entering the normalization phase of wearable computing.

Software Limitations Everywhere

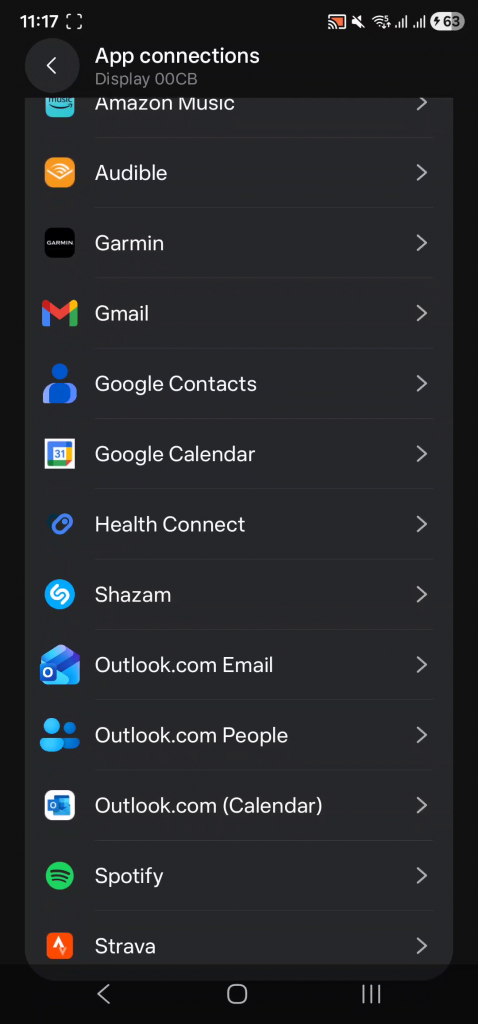

Outside of Meta’s own ecosystem, software support remains extremely limited.

You can connect some third-party apps from your phone, but most integrations feel shallow. Microsoft Outlook is one of the few genuinely useful additions, though replying to emails still relies entirely on voice dictation.

Recent firmware updates added support for phone notifications from non-Meta apps like Slack and Discord, but functionality is frustratingly incomplete. You still can’t fully read long messages or comfortably respond to them.

There are also:

- navigation features

- weather widgets

- stock widgets

- simple games like 2048

- live captions

- a teleprompter mode

- translation tools

The teleprompter is genuinely useful. You upload text through the mobile app, adjust speed and font size, and read directly from the display while appearing far more confident and prepared than you actually are.

The translator, however, currently supports only a handful of major languages.

And bizarrely, because the glasses are officially focused on the US market, some widgets still don’t properly support Celsius instead of Fahrenheit.

Meta AI: Impressive and Slightly Terrifying

Naturally, Meta heavily pushes its AI assistant inside the glasses.

You can ask what you’re looking at, request recipes based on visible ingredients, set reminders, call contacts, or ask random trivia questions. The experience itself works surprisingly well.

For example, I asked the glasses what I could cook using random ingredients on a table. Meta AI suggested marinating meat in Gatorade while correctly advising against eating toilet paper.

So at least we’ve progressed beyond the era where AI recommends adding glue to pizza.

The bigger concern is privacy.

Investigations previously revealed that Meta partnered with Sama in Kenya to analyze user-captured footage for AI training purposes. Meta later ended that partnership, but that was an attempt to kill the leak rather than to fix the underlying issue. The broader concern remains unchanged: smart glasses with always-available cameras and AI assistants inevitably raise uncomfortable questions about data collection and surveillance. And unfortunately, disabling AI functionality also removes many of the glasses’ most useful features.

Battery Life Is Still Bad

There is still a lot of work to be done before glasses become THE device for everyday use, and session length is a major part of it. The display significantly impacts battery life: under active usage, the glasses struggle to last more than two hours.

Thankfully, the charging case itself improved substantially. Unlike the bulky older Meta smart glasses case, the new one folds into a compact wallet-like shape that’s dramatically easier to carry around daily.

The gesture bracelet lasts far longer – usually two or three days per charge.

The Biggest Problem: There Still Isn’t Much To Do

This became the core issue after the initial excitement faded. Once the novelty wore off, I had to force myself to wear the glasses.

Not because they were uncomfortable.

Not because the technology was bad.

But because the ecosystem still feels incomplete.

You still can’t browse the web. You can’t watch YouTube, read Reddit, or use full-featured messaging apps. Text input remains awkward. Most apps feel like simplified companion widgets rather than true standalone experiences.

And unless you’re deeply invested in Instagram, there simply isn’t enough compelling daily content to justify wearing the glasses constantly. I had never used Instagram before getting the Meta Ray-Ban Display, so I started with an empty account just to scroll Reels. Well, that was a horrifying experience in itself.

At times, the device feels less like the future of computing and more like a preview build of the future.

An extremely impressive preview build.

But still a preview.

Final Thoughts

I’m genuinely glad devices like Meta Ray-Ban Display exist.

Even with all their flaws, they represent something important: the first believable attempt at turning cyberpunk-style wearable computing into a mainstream consumer product.

And within the next few years, competitors from Samsung, Apple, Xiaomi, and many others will almost certainly flood the market.

Nobody wants to miss what could eventually become a trillion-dollar industry.

But right now, these glasses are still early-adopter hardware.

They’re for people who desperately want to experience the future before it’s fully ready. Or for people so addicted to Instagram that they cannot live without it.

Everyone else should probably wait for the next generation. Or something that doesn’t bear Meta’s logo.